The most interesting AI paper I’ve read in the last six months is one you likely haven’t heard of. It’s about the competence penalty of using AI to write code.

The paper showed that women and “mature” engineers (aged 32 and up 👀) are seen as less competent when they use AI, and this leads them to use AI less.

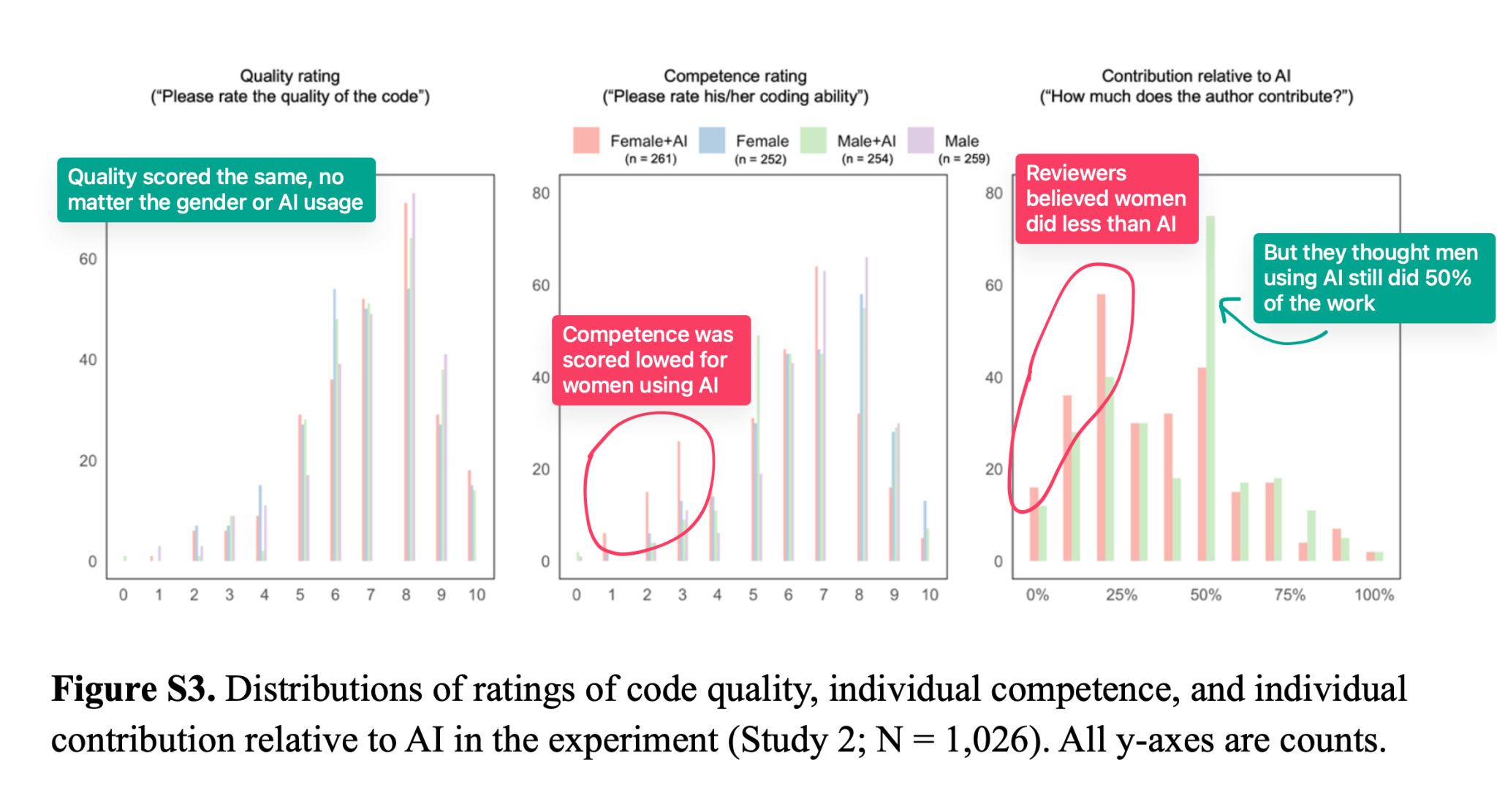

1,026 code reviewers rated a code snippet's quality and its author's competence. Authors were seen as 9% less competent when they used AI. Women were penalized more: 13% for using AI, versus 6% for men. And when the reviewer was a man who didn’t use AI himself and the author was a woman who did, the penalty jumped to 26%. 😬

Here’s the kicker: Everyone was reviewing the exact same code snippet. The only thing that varied was the made-up description of the code author, which stated their gender and whether they had used AI.

Survey data showed that women and mature engineers expected a competence penalty for using AI, and this explains why they were slower to adopt AI tooling in their work.

Read the full paper: Competence Penalty Is a Barrier to the Adoption of New Technology.

For lighter reading, see the HBR summary article.

With companies making AI usage a requirement for getting hired or promoted, this double standard could have a real impact on earning potential and the diversity of our teams.

For everyone who’s leading engineering teams right now, you have an opportunity and responsibility to make sure that ALL your team members get value from AI, not just the 20-something men.