You’ve been asked what the ROI is of your AI tooling.

Maybe it’s for an exec or a board meeting; maybe they want to know how the cost of AI compares to the benefit. Or maybe they want to see whether the organization is getting as much as it can out of AI tooling.

Whatever the reason, you’re now trying to measure the ROI of your AI tooling, and your searches or a friend led you here. Welcome. I have good news and bad news: The bad news is that there’s no easy answer; the good news is that we’ll share some principles you can use to come up with a good answer in your context.

And yes, we have a calculator at the end of this article – but the principles are what matter most, so please don’t skip this upfront section!

To be clear: My goal is that this article supports your thinking about measuring AI ROI – but I’m not promising you a magic answer because there isn’t one.

ROI means return on investment. It’s a measure of how much benefit you got from an initiative over and above the costs. Here’s the formula:
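Written out in its standard form (the labels below are just names for the benefit and cost described above):

$$
\text{ROI} = \frac{\text{Returns} - \text{Investment}}{\text{Investment}} \times 100\%
$$

For example, an investment of 100 that brings back 150 gives an ROI of 50%.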

There are variations on this – for example, if you made an investment in one year and then had to wait more than a year to get returns on it – but the simple formula above is enough for calculating AI impact, since we’re certainly hoping to get benefits from AI within the same year we rolled it out. Investopedia has a good introduction to ROI for those wanting more.

Whether you’ve done ROI calculations before or not, let’s start with some foundational principles so we’re all on the same page.

Let’s go through each in detail:


This is the business world’s equivalent of “garbage in, garbage out.” When we’re in the fuzzy realm of projections, there’s no right answer – so the best we can do is:

If someone gives you an ROI number without telling you the assumptions it’s based on, it’s time to ask a LOT of questions – because who knows where that number came from or if it’s actually useful.

If you’re building your own financial model to calculate ROI, the key is to build it in a way that is transparent and makes it easy to change assumptions.
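As a rough illustration of what "transparent and easy to change" can look like, here is a minimal Python sketch that keeps every assumption in one named place. The class name, field names, and all numbers are hypothetical, chosen only for illustration; a spreadsheet with a clearly labeled assumptions tab would serve the same purpose.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Assumptions:
    """Every input to the model lives here, in one visible place."""
    annual_investment: float  # total cost of the initiative, in your currency
    annual_returns: float     # estimated benefit attributed to the initiative


def roi_percent(a: Assumptions) -> float:
    """Standard ROI: net gain divided by investment, as a percentage."""
    return (a.annual_returns - a.annual_investment) / a.annual_investment * 100


# Hypothetical baseline numbers, for illustration only.
baseline = Assumptions(annual_investment=100_000, annual_returns=150_000)

# Changing one assumption is a one-line edit, so scenarios are easy to compare.
pessimistic = replace(baseline, annual_returns=110_000)

print(f"baseline:    {roi_percent(baseline):.0f}%")
print(f"pessimistic: {roi_percent(pessimistic):.0f}%")
```

Because the assumptions are separate from the calculation, anyone reading the result can see which inputs produced it and try their own.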


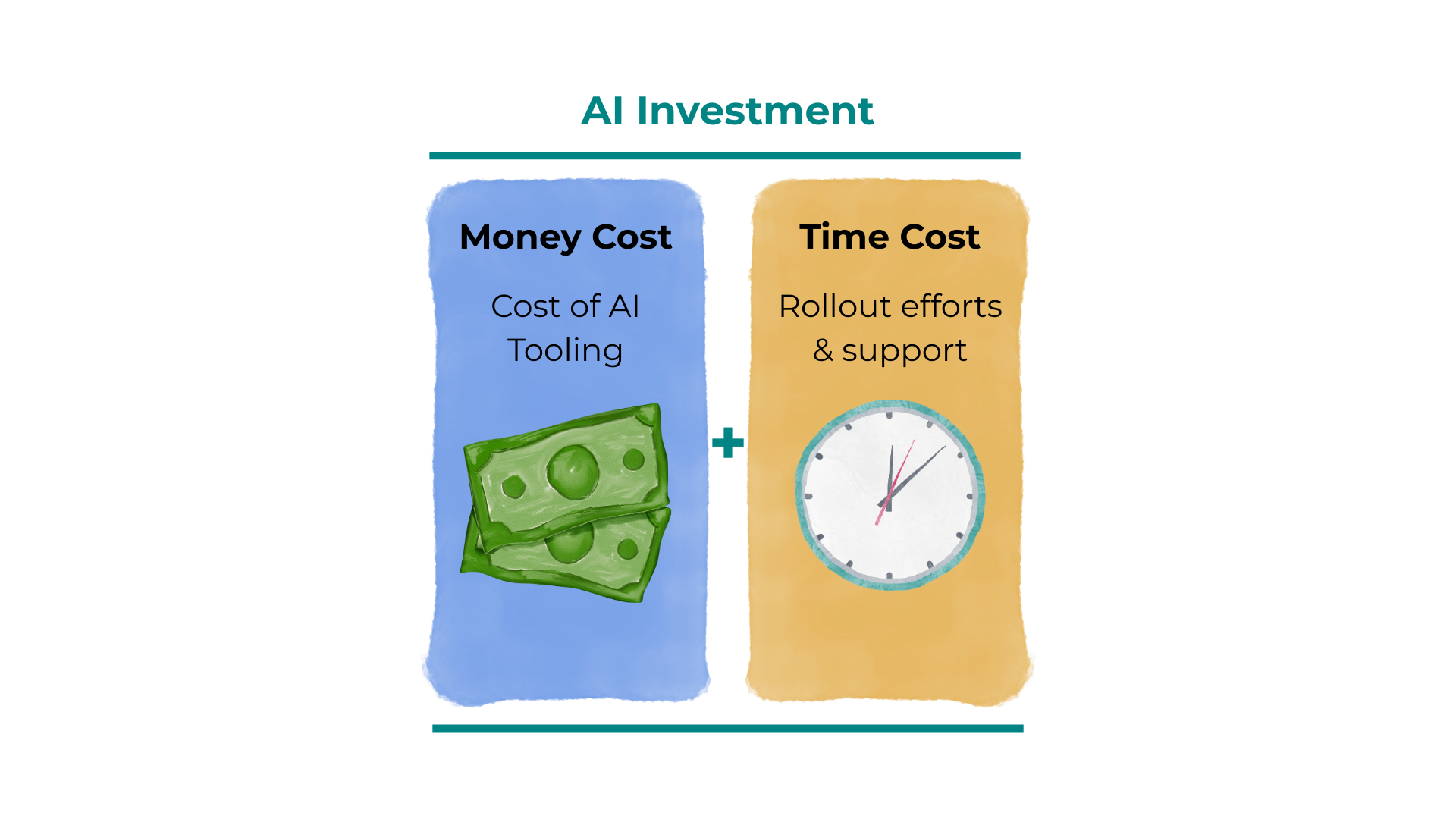

The financial costs of an initiative are usually pretty clear – but they’re not the only kind of cost. A second one to consider is the cost of your time.

You always have choices about how you spend your time – and as they say, it’s the one thing you can never get more of. Especially when you’re rolling out something new, and it carries the steep learning curve that AI does, that time investment is not trivial.

So we recommend that your AI ROI model should estimate not only the financial costs but also the time that went into making it happen.
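One simple way to fold time into the investment side is to convert hours into money using an hourly cost. The sketch below assumes that approach; the function name, parameters, and numbers are hypothetical.

```python
def total_investment(tool_cost: float, hours_spent: float, hourly_rate: float) -> float:
    """Financial cost plus the cost of people's time, in the same currency."""
    return tool_cost + hours_spent * hourly_rate


# Hypothetical inputs: licence spend, hours spent on rollout and learning,
# and a fully loaded hourly cost per person.
cost = total_investment(tool_cost=20_000, hours_spent=400, hourly_rate=75)
print(cost)  # 50000
```

In this made-up case the time cost (30,000) is larger than the licence cost, which is exactly the kind of thing a money-only model would miss.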


The investment you made – the money and time you spent – was because you were hoping to get value from that investment.

When we calculate the returns, they should be based on changes that came from the investment. So if we want the ROI of AI, we need to look at changes that happened because of AI.

When you’re looking at returns from buying shares, linking returns back to the investment is simple. With organizational initiatives it gets tricky, because lots of things are always changing in your organization. Maybe your AI tooling rollout coincided with a major delivery deadline. It then looks like all your metrics improved massively because of AI, when you’re really seeing the impact of people on your team working a bunch of evenings and weekends to hit the deadline. If you don’t account for this, you risk crediting AI for improvements that were driven at least in part by longer hours.
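To make the overtime example concrete, here is a rough sketch of one sanity check: divide output by hours worked before comparing the two periods. The metric, the numbers, and the function name are all hypothetical. This only adjusts for the one confounder described above; it does not isolate the effect of AI.

```python
def change_percent(before: float, after: float) -> float:
    """Relative change from before to after, as a percentage."""
    return (after - before) / before * 100


# Hypothetical numbers for one team, before and after the rollout.
deploys_before, hours_before = 40, 4_000
deploys_after, hours_after = 55, 5_000  # includes evenings and weekends

raw = change_percent(deploys_before, deploys_after)
per_hour = change_percent(deploys_before / hours_before,
                          deploys_after / hours_after)

print(f"raw change in deploys:      {raw:.1f}%")
print(f"change in deploys per hour: {per_hour:.1f}%")
```

With these made-up numbers, the raw improvement is 37.5%, but it shrinks to about 10% once the extra hours are taken into account.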

The only way to truly account for all confounding factors is to run a randomized controlled trial – but that’s impractical for most organizations.

Fortunately, there are fallback methods that get us closer to measuring the impact of a specific initiative, like an AI rollout, even if the result won’t be 100% perfect. These include things like:

As a note on all the above – I’ve focused here on the ROI of rolling out AI tooling, but these principles work for the ROI calculation of any AI intervention – including human ones like starting regular AI demos or doing an AI hackathon.

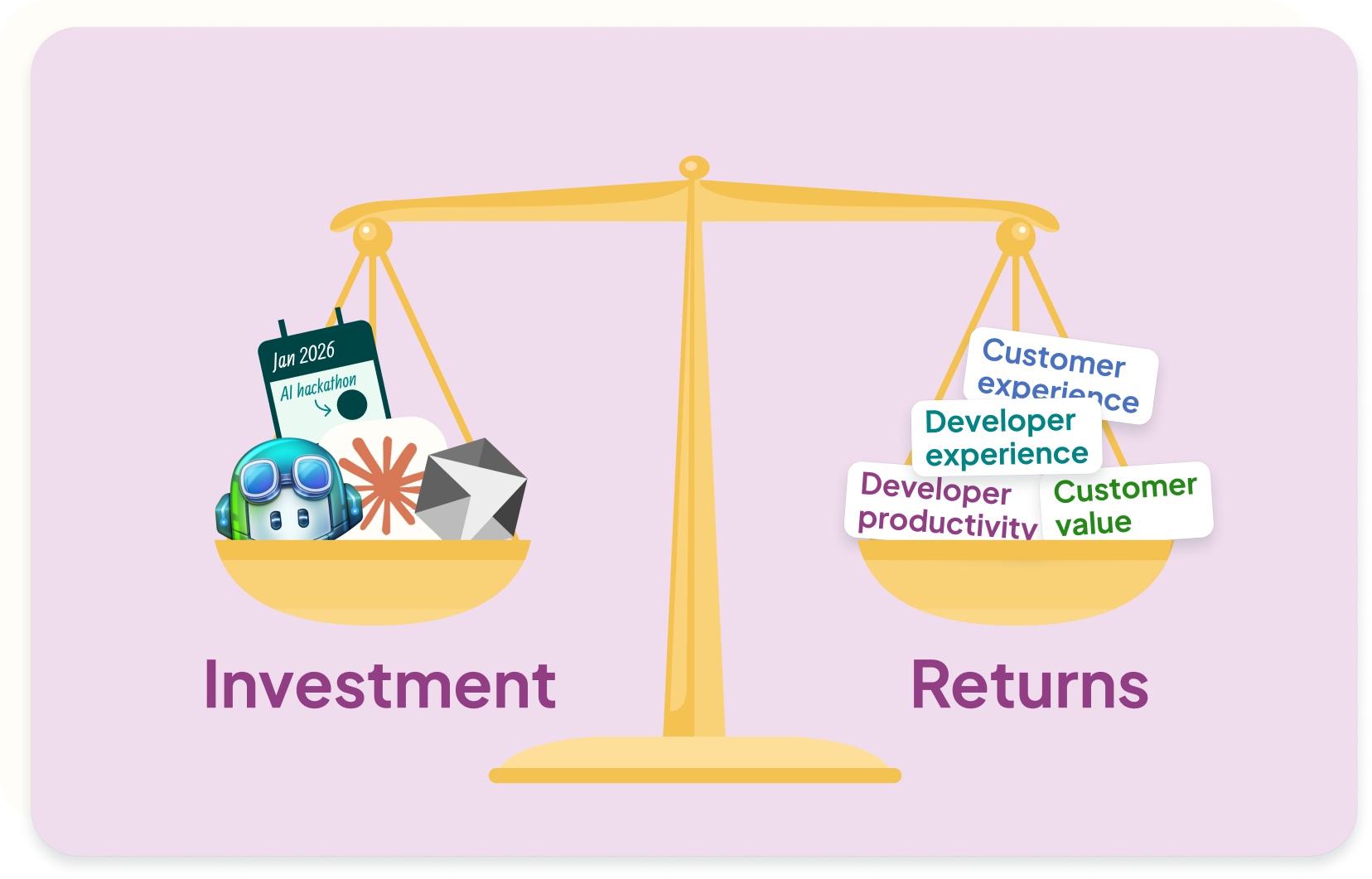

We’re clear on our foundational principles, so now: How might we apply them to measuring the ROI of AI?

Now we can build out our measurement approach:

Remember our principle that we want to look at money and time costs →

That gives us our formula:

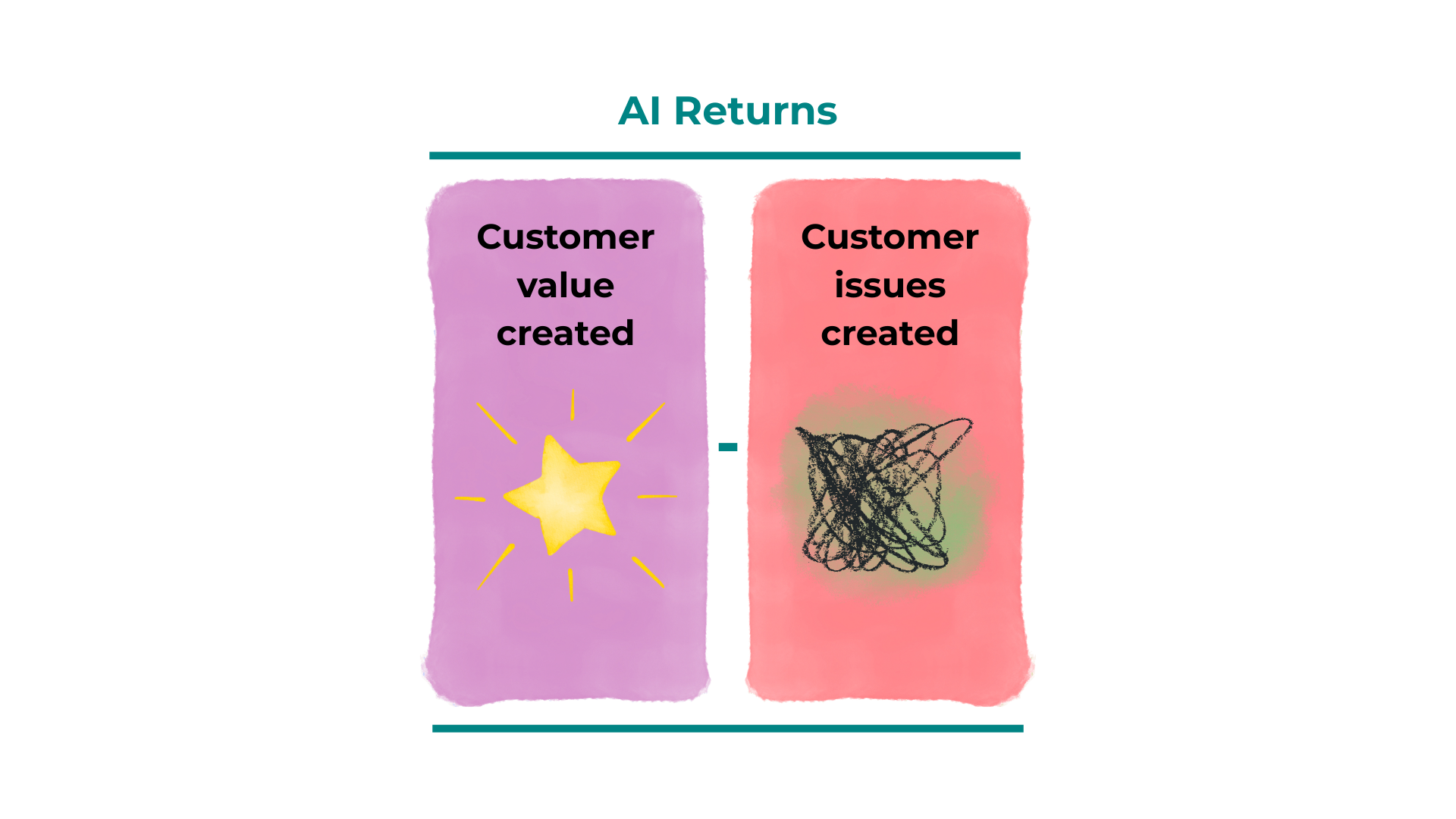

The key calculation: customer value created - customer issues created = AI returns.

We want to look at the benefits we’ve created by rolling out AI tooling. Let’s first specify what kind of benefit we’re hoping for with AI – what’s the why?

There are lots of things you’re probably hoping for – including a better developer experience, making it easier to deliver faster, or even helping you as a leader get more building done in your limited time. And in the “benefits” calculation, we also need to consider when the change means that things get worse – the big worry with AI is how it’s impacting quality, and if speed today means we’ll end up spending more time fixing customer bugs, maintaining our codebase, or responding to AI incidents later.

To simplify things here, I’m going to focus specifically on benefits (or downsides) for the customer. Why? If someone’s asking about ROI, they ultimately care about financial outcomes – and engineering drives those by delivering customer value and minimizing customer issues.

I’m also going to guess that you want to use existing metrics as much as possible, so you don’t have to spend a bunch of time getting data or running calculations to answer the ROI question. That’s why I’ll be leaning on the DORA metrics as a way to approximate benefits. The DORA metrics come from a focus on customer impact and as a result are linked to better financial performance for companies.

Here’s how we can calculate the change in customer impact using DORA metrics:

If DORA isn’t what works for your audience, you can always substitute in the customer value metrics that you use – maybe you think about value delivered based on features or epics, and maybe you want to include all customer-reported bugs as the proxy for customer issues.
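As a sketch of how "customer value created minus customer issues created" could be turned into a number, the Python below uses two DORA metrics as proxies: deployment frequency for value and change failure rate for issues. This is one possible mapping, not necessarily the article's own. The names and the two money conversions (`value_per_deployment`, `cost_per_failure`) are hypothetical assumptions you would need to replace with figures that make sense in your context.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Period:
    deployments: int            # deployment count for the period
    change_failure_rate: float  # share of deployments that failed, 0 to 1


def ai_returns(before: Period, after: Period,
               value_per_deployment: float, cost_per_failure: float) -> float:
    """Customer value created minus customer issues created, in currency."""
    extra_deployments = after.deployments - before.deployments
    failures_before = before.deployments * before.change_failure_rate
    failures_after = after.deployments * after.change_failure_rate
    value_created = extra_deployments * value_per_deployment
    issues_created = (failures_after - failures_before) * cost_per_failure
    return value_created - issues_created


# Hypothetical before/after periods and money conversions.
before = Period(deployments=100, change_failure_rate=0.10)
after = Period(deployments=130, change_failure_rate=0.15)
returns = ai_returns(before, after,
                     value_per_deployment=2_000, cost_per_failure=3_000)
print(round(returns))
```

With these made-up inputs, 30 extra deployments create 60,000 of value, 9.5 extra failures cost 28,500, and the returns come to roughly 31,500. Swapping in features shipped or customer-reported bugs only changes what the two periods count, not the shape of the calculation.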

You made it! To summarize, the key formulas to use are: